My kid is thinking outside the box today.

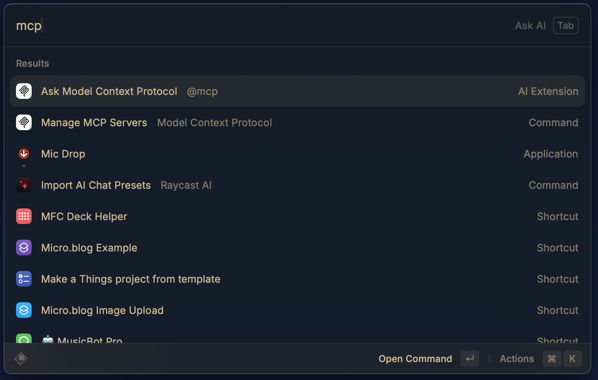

Spent some time dorking out today. Curious about all this MCP brouhaha, I wired it up so that I can interact directly with my Obsidian vault by simply typing commands. Crazy stuff.

Whoa... I just made $50 as a Raycast affiliate. They offer 30% commission on all payments. All I did was make a few YouTube videos about Raycast and include my link in the description. Makes me think I should be cranking out more of those videos. I love Raycast.

Nothing like an upcoming subscription renewal to kick me into gear moving all my data into another platform. So. Tired. Of. All. The. Subs.

Took some time this morning to use the helpful WippReMacro utility to create a corner camera in DaVinci Resolve, complete with the multiple settings that I’d been configuring every time I created a new project.

Exporting the majority of my completed video editing projects to my NAS. As one does on a Friday night. File management for the win.

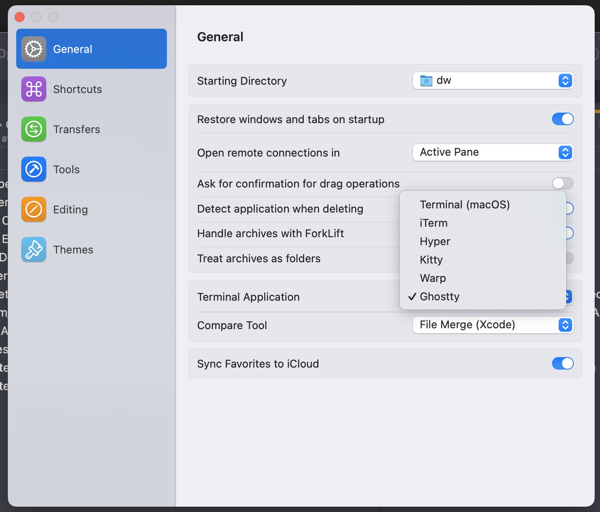

Nice. The latest ForkLift update allows selection of Ghostty as your preferred terminal.

Bought my first ebook on Bookshop.org. After the recent Amazon Kindle fiasco, I resolved to never buy another Kindle book again. I bought How Fascism Works.

Pinboard up for renewal. Do I pay for another year or finally switch to Linkding? On one hand, I like not having to think about managing yet another solution on my own. I also like using Pinboard apps on my iPhone and the ease of saving links via the share sheet.

On the other hand, it’s one more subscription. What to do? What to do?

Moved out of the basement and into the sunroom upstairs. I’m looking forward to the change of pace and being able to look out my window to see the Rocky Mountains. I’ve got the window open, breathing in the fresh air, and feeling good.

Not spending any money today during this economic blackout. I encourage you to not spend any money either.

Cleaning up subtitles in DaVinci Resolve. It’s for an hour-long interview that I’m helping out with. First time working with subtitles, and I’m thankful to have this opportunity under my belt. Nothing complicated, but good to get hands-on experience.

I was able to download all of my Kindle books. I’ll never buy a Kindle book again.

Set up an n8n instance on Railway and assigned a custom domain. I think I’m done with Zapier and Make.com, at least for my own needs. I still get paid by clients to work with those other platforms, but going forward, my energy is going into n8n.

Making New Year’s Breakfast, a video I created this morning to welcome 2025.

Canceled my Adobe Premiere Pro trial with one day left. I can’t stand Adobe and felt at odds with myself the whole time I was using it. I admit that I did like Premiere Pro, but I’m going all in on DaVinci Resolve.

Swapped out my Canon M50 for an iPhone and I can barely tell the difference. Much less gear now and I like that.

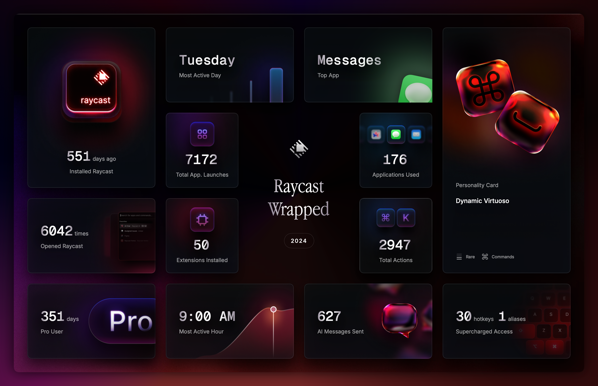

Raycast Wrapped for 2024.

I started delivering for Uber Eats today and recorded a video at the end of the day summarizing my thoughts. youtu.be/NKKg8Kthj…

If you are anything like me, you’d want to use an SSL certificate from Let’s Encrypt so that you can access your Proxmox server via a fully qualified domain name, am I right?

Well, I recently switched from Cloudflare to Namecheap and had to figure out how to reconfigure things, so I made a how-to video.